AI Agents in UK Defence: Building an OSINT Command Centre

In my previous post on vibe coding in Defence, I built a simple web app that scrapes GOV.UK pages and plots military range activity on a map. That experiment showed that someone without a software development background could use AI coding tools to build something real and useful. But it was essentially a pipeline — a fixed sequence of scripts that always do the same thing in the same order.

This time I wanted to push further. What happens when you give the AI more autonomy — not just asking it to write code, but asking it to make decisions about what to do next?

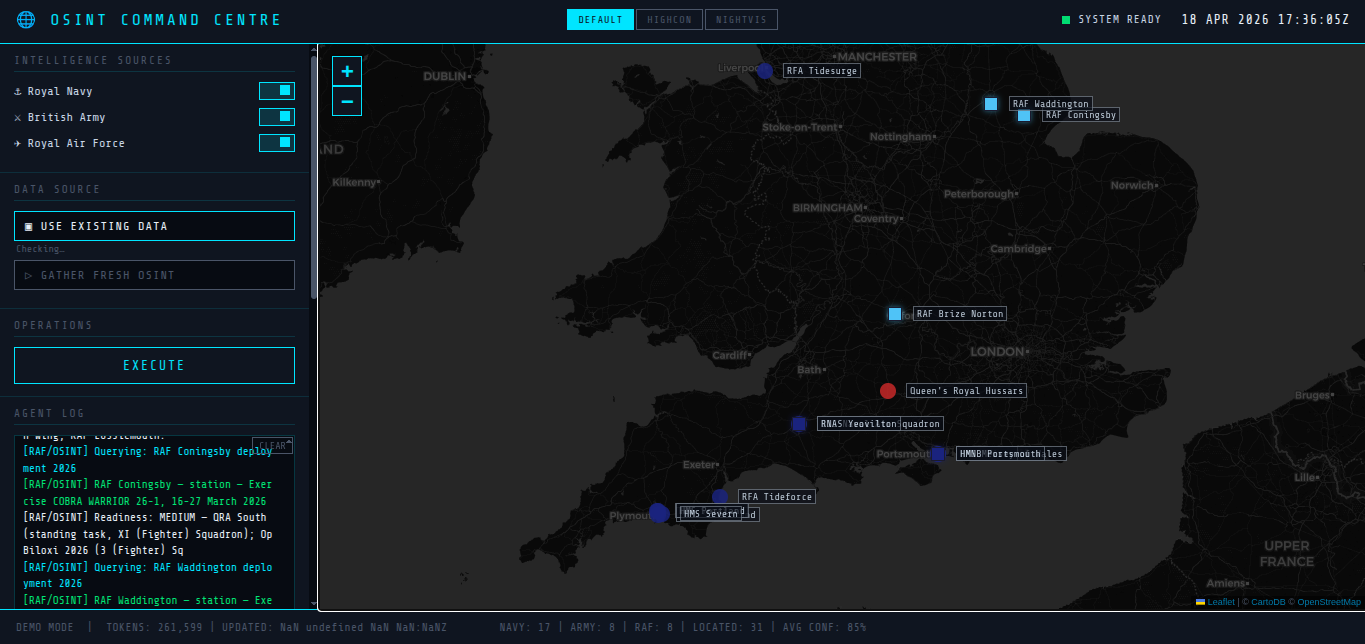

The result is a working prototype of an AI-powered OSINT Command Centre.

You can try it here.

What it does

The tool uses AI agents to gather open-source intelligence about UK military units across all three services. To keep things simple, this demo covers only the dispositions of a small number of UK units: where they are and what they might be doing. Each service has a pair of agents: an OSINT agent that searches the web for recent reporting about units, deployments, and exercises, and an analyst agent that enriches what's found with assessments of operational readiness and capability.
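To make the two-agent arrangement concrete, here is a minimal sketch of how one OSINT agent and one analyst agent per service could hand data to each other. Everything in it is an assumption for illustration: the field names (`location`, `readiness`, `capability_note` and so on), the function names, and the stubbed return values are hypothetical, not the schema or code the prototype actually uses.

```python
from dataclasses import dataclass, asdict


@dataclass
class Finding:
    """What a (hypothetical) OSINT agent reports for one unit."""
    unit: str
    service: str
    location: str
    activity: str
    source_url: str


@dataclass
class Assessment:
    """A finding plus the analyst agent's enrichment."""
    finding: Finding
    readiness: str
    capability_note: str


def osint_agent(service: str) -> list[Finding]:
    # Stub: a real agent would search the web and read full articles.
    return [
        Finding(
            unit=f"Example {service} Unit",
            service=service,
            location="Example Base",
            activity="exercise",
            source_url="https://example.org/report",
        )
    ]


def analyst_agent(findings: list[Finding]) -> list[Assessment]:
    # Stub: a real agent would ask the model to assess each finding.
    return [
        Assessment(finding=f, readiness="unknown", capability_note="placeholder")
        for f in findings
    ]


SERVICES = ["Royal Navy", "British Army", "Royal Air Force"]


def run_all() -> dict[str, list[dict]]:
    """Run the OSINT agent, then the analyst agent, once per service."""
    return {
        service: [asdict(a) for a in analyst_agent(osint_agent(service))]
        for service in SERVICES
    }


report = run_all()
```

Keeping the raw finding nested inside the assessment means the source URL travels with every judgement, which makes it easier to check where an assessment came from.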

The agents use the Anthropic API with tool use, meaning the AI model decides at each step what to do next: which search to run, which page to fetch, what to extract, and when to stop. They're equipped with a Brave Search API key and follow links to read full articles rather than relying on search-result snippets. This is what makes them genuinely agentic rather than just scripts with an AI call in the middle.
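The shape of that loop can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the prototype's code: the model call is replaced by a scripted stub (`scripted_model`) so the example runs offline, and the tool names `search_web` and `fetch_page`, the action dictionaries, and the `max_steps` budget are all hypothetical. In a real agent, `decide` would wrap a call to the model, which would return either a tool request or a final answer.

```python
from typing import Callable


# Hypothetical tools: stand-ins for a web search and a page fetch.
def search_web(query: str) -> str:
    return f"results for: {query}"


def fetch_page(url: str) -> str:
    return f"text of {url}"


TOOLS: dict[str, Callable[[str], str]] = {
    "search_web": search_web,
    "fetch_page": fetch_page,
}


def run_agent(decide, task: str, max_steps: int = 10) -> dict:
    """Loop until the model says it is done or the step budget runs out."""
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        action = decide(history)  # the model chooses the next step
        if action["type"] == "finish":
            return {"answer": action["answer"], "steps": len(history) - 1}
        result = TOOLS[action["tool"]](action["argument"])
        # Feed the tool output back so the next decision can use it.
        history.append(
            {"role": "tool", "name": action["tool"], "content": result}
        )
    return {"answer": None, "steps": len(history) - 1}


def scripted_model(history):
    """Stub standing in for the real model: search, fetch, then stop."""
    tool_turns = [m for m in history if m["role"] == "tool"]
    if len(tool_turns) == 0:
        return {"type": "tool", "tool": "search_web",
                "argument": "example unit exercise"}
    if len(tool_turns) == 1:
        return {"type": "tool", "tool": "fetch_page",
                "argument": "https://example.org/article"}
    return {"type": "finish", "answer": "summary of findings"}


result = run_agent(scripted_model, "Find recent reporting on an example unit")
```

The difference from a fixed pipeline is that nothing in `run_agent` says which tool runs or how many times. The only hard constraint is the step budget, which stops a confused agent from looping forever.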

The enriched data is plotted on an interactive map styled to look like a military command centre display. I fed the coding assistant a mock-up image of a futuristic operations room interface and asked it to follow that as a style guide. The version online is static — it shows pre-generated data and doesn't run the agents live.
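One common way to serve pre-generated data to a static map is to convert the agents' output into GeoJSON once and ship the result alongside the page. The sketch below assumes that approach; the prototype's actual data format isn't described here, and the record fields, unit names, and coordinates are placeholders.

```python
import json

# Placeholder records; names and coordinates are illustrative only.
records = [
    {"unit": "Example Unit A", "lat": 51.5, "lon": -0.1, "activity": "exercise"},
    {"unit": "Example Unit B", "lat": None, "lon": None, "activity": "unknown"},
]


def to_geojson(records):
    """Build a GeoJSON FeatureCollection from unit records."""
    features = []
    for r in records:
        if r["lat"] is None or r["lon"] is None:
            continue  # a unit with no known location cannot be plotted
        features.append({
            "type": "Feature",
            # GeoJSON orders coordinates as [longitude, latitude].
            "geometry": {"type": "Point", "coordinates": [r["lon"], r["lat"]]},
            "properties": {"unit": r["unit"], "activity": r["activity"]},
        })
    return {"type": "FeatureCollection", "features": features}


geojson = to_geojson(records)
payload = json.dumps(geojson, indent=2)  # could be saved as a static file
```

Most web mapping libraries can load a file like this directly, so the published site needs no server-side agent code at all.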

How I built it

The development process was a conversation between two versions of Claude. I used Claude (the chat assistant) to think through the architecture, refine ideas, and generate detailed instruction documents. Then I fed those instructions to Claude Code, which wrote the actual Python agents, the Flask server, and the frontend. When something didn't work, I went back to Claude to diagnose the problem and implement a fix.

My key insight was that writing good instructions for Claude Code matters more than any single piece of code. Getting the prompt right — being specific about what the agent loop should look like, what the output schema should contain, what the agent should not do — was where the real intellectual work happened. The coding itself was almost a side effect.

What this might mean for Defence

This is only a demo using very limited open-source information. A real application could be connected to authoritative sources — licensed commercial data such as maritime tracking feeds, structured force databases, and potentially privileged information including intelligence about adversaries.

But the interesting thing here isn't about OSINT tools. It's about what happens when both AI-assisted coding and AI agents become widely available across Defence.

One path is to use these capabilities to accelerate the development of bespoke apps for warfighters: purpose-built tools for specific tasks, developed quickly by digital teams and iterated as needs evolve. That's powerful, but it still assumes a separation between the people who build tools and the people who use them.

A second path is more radical: put the assistive tools themselves — with suitable training, support, and data access — directly into the hands of operators. Let a watchkeeper or an analyst conjure up their own tools iteratively as an operation unfolds, shaping the software to the situation rather than the other way around.

A third option is a hybrid — pairing military operators with forward-deployed engineers who can rapidly prototype tools together, with the operator providing the domain knowledge and operational context while the engineer manages quality, security, and technical risk. Teaming operators with engineers is certainly not a new idea, but the advent of AI-assisted rapid-prototyping could give it fresh urgency. When a working prototype can be built in hours rather than months, the value of having an engineer alongside the operator increases dramatically.

Each of these paths carries risks. If anyone can quickly build something that looks polished and authoritative, how do we maintain quality, accuracy, and trust? How do we govern AI-generated tools that might only exist for the duration of a single operation? How do we ensure that a tool built under pressure doesn't quietly produce credible-looking nonsense?